How to validate Terraform scripts and run static security code analysis as part of an Azure DevOps CI/CD pipeline

In my previous post, I described how to create and test a SQL Server and the required infrastructure in Azure. In this post, we will update the build pipeline to validate Terraform syntax and introduce SAST (Static Application Security Testing) to check the infrastructure as code (IaC) scripts before publishing artifacts. There are many static analysis security scanners available for Terraform, such as tfsec, terrascan or Snyk. In our example, we will use tfsec as part of our DevSecOps pipeline. All scripts have been updated to Terraform 1.0.1 and are available in the DBAinTheCloud GitHub repository.

Build pipelines

For demonstration purposes, we will reuse the scripts from my previous post and update the build pipeline, adding steps to validate the Terraform scripts, run security scans and publish the results to Azure DevOps.

Validate terraform script

Terraform comes with a built-in mechanism to validate scripts, and we will use it in our pipeline. First we have to initialise Terraform (terraform init) and then run the validation (terraform validate) with the following steps.

- bash: |
    terraform init \
      -backend-config "key=dso1/core.terraform.tfstate" \
      -backend-config "subscription_id=$SUBSCRIPTION_ID" \
      -backend-config "tenant_id=$TENANT_ID" \
      -backend-config "client_id=$CLIENT_ID" \
      -backend-config "client_secret=$CLIENT_SECRET"
  workingDirectory: '$(Build.SourcesDirectory)/11-tf-security-tests/infrastructure'
  displayName: Terraform Init
  # Secret pipeline variables are not mapped into the script environment
  # automatically, so they are passed in explicitly here.
  env:
    SUBSCRIPTION_ID: $(subscription_id)
    TENANT_ID: $(tenant_id)
    CLIENT_ID: $(client_id)
    CLIENT_SECRET: $(client_secret)

- bash: |
    terraform validate
  workingDirectory: '$(Build.SourcesDirectory)/11-tf-security-tests/infrastructure'
  displayName: Terraform Validate

The complete pipeline definition is available in my Git repository.

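Keep in mind that terraform validate only checks that the configuration is syntactically valid and internally consistent; it does not contact Azure, so the environment build might still fail because of errors reported by the Azure API.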
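If the validation step does not need the remote state, a lighter variant is possible. Terraform's documentation describes initialising a working directory for validation with terraform init -backend=false, which still installs the providers and modules that validate needs but skips the backend, so this step would not need the service principal credentials. This is a sketch of an alternative, not the step used in my repository; it reuses the working directory from above.

```yaml
# Sketch: validate without configuring the remote backend.
# -backend=false installs providers and modules (required by validate)
# but skips backend initialisation, so no credentials are needed here.
- bash: |
    terraform init -backend=false
    terraform validate
  workingDirectory: '$(Build.SourcesDirectory)/11-tf-security-tests/infrastructure'
  displayName: Terraform Init (no backend) and Validate
```

The backend-configured init would then only be needed in the stages that actually plan or apply changes.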

Security IaC analysis

To scan the Terraform scripts, we will use tfsec, a static analysis security scanner for Terraform code. We will run it on a Microsoft-hosted build agent and, to avoid installing it, we will run it in a Docker container. We will install Docker with the DockerInstaller task provided by Microsoft, then run the container image published by tfsec with the folder containing our Terraform scripts mounted into it.

- task: DockerInstaller@0
  inputs:
    dockerVersion: '17.09.0-ce'

- bash: |
    chmod -R 777 infrastructure
  workingDirectory: $(Build.SourcesDirectory)/11-tf-security-tests
  displayName: Update permissions - folder

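- bash: |
    # Mount the Terraform folder as /src and write the JUnit report back into it.
    # $PWD is used instead of $(pwd) so Azure Pipelines cannot mistake it for macro syntax.
    # --soft-fail makes tfsec exit with code 0 even when it reports issues.
    docker run --rm -t -v "$PWD:/src" tfsec/tfsec /src \
      -f junit --out /src/TEST-ftsec-results.xml \
      --tfvars-file /src/terraform.tfvars \
      --soft-fail
  workingDirectory: '$(Build.SourcesDirectory)/11-tf-security-tests/infrastructure'
  displayName: Run tfsec scan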

- bash: |
    # The report was created inside the container; give the agent user access to it.
    sudo chown vsts:docker TEST-ftsec-results.xml && sudo chmod 777 TEST-ftsec-results.xml
  workingDirectory: '$(Build.SourcesDirectory)/11-tf-security-tests/infrastructure'
  displayName: Update permissions - test results
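The two permission steps are needed because files written by the container are not owned by the agent user. A possible alternative, which is not used in the original pipeline and has not been tested here, is to run the container under the agent's own UID and GID with Docker's --user flag, so the report is created with the right owner and both chmod/chown steps can be dropped. This assumes the tfsec image does not need to write anywhere outside the mounted /src folder.

```yaml
# Sketch (untested): run tfsec as the agent user so the report it writes
# into the mounted folder is owned by that user, with no chmod/chown steps.
- bash: |
    docker run --rm -t --user "$(id -u):$(id -g)" -v "$PWD:/src" tfsec/tfsec /src \
      -f junit --out /src/TEST-ftsec-results.xml \
      --tfvars-file /src/terraform.tfvars \
      --soft-fail
  workingDirectory: '$(Build.SourcesDirectory)/11-tf-security-tests/infrastructure'
  displayName: Run tfsec scan as agent user
```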

We use the output parameters to generate an XML file with the test results (-f junit --out /src/TEST-ftsec-results.xml) and then publish it to Azure DevOps.

- task: PublishTestResults@2
  displayName: Publish Test Results
  inputs:
    testResultsFormat: 'JUnit' # Options: JUnit, NUnit, VSTest, xUnit, cTest
    testResultsFiles: '**/TEST-*.xml'
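The pattern '**/TEST-*.xml' is resolved relative to the task's default search folder, $(System.DefaultWorkingDirectory), so it would also pick up any other TEST-*.xml files in the repository. A narrower variant, sketched here as an option rather than the author's configuration, points searchFolder at the Terraform folder and gives the run a recognisable title (the title 'tfsec scan' is just an example).

```yaml
# Sketch: only collect the tfsec report and label the test run.
- task: PublishTestResults@2
  displayName: Publish Test Results
  inputs:
    testResultsFormat: 'JUnit'
    testResultsFiles: 'TEST-*.xml'
    searchFolder: '$(Build.SourcesDirectory)/11-tf-security-tests/infrastructure'
    testRunTitle: 'tfsec scan'
```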

A similar approach can be used with other scanners, such as terrascan or Snyk, which also provide Docker images.

Congratulations!

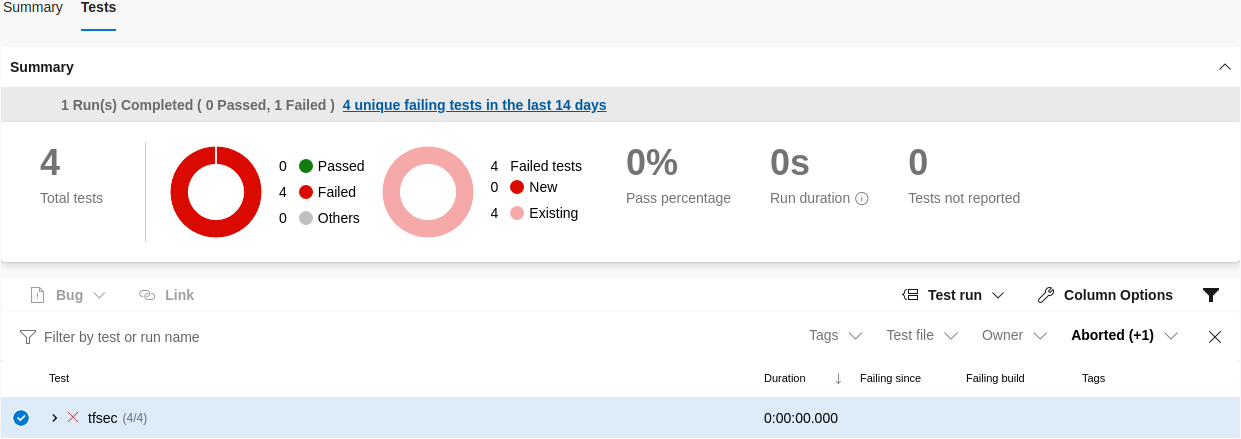

We have successfully run the build pipeline, validated the Terraform scripts and assessed the environment configuration from a security point of view. Now you can review the findings and either fix the reported issues or accept them as security exceptions.

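Our pipeline is running in “discovery mode”: the build does not fail when the security scan reports errors or warnings, because --soft-fail makes tfsec exit successfully and the publish test results step does not fail on failed tests by default. This behaviour can be changed in the publish test results step.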
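One way to switch to an "enforcement mode" is the failTaskOnFailedTests input of PublishTestResults@2, sketched below. It assumes tfsec writes each finding as a failed test case in the JUnit report. Keeping --soft-fail in the tfsec step means the scan step itself still succeeds, so the report is always published before the build is failed.

```yaml
# Sketch: fail this task (and therefore the build) when the JUnit report
# contains failed test cases, i.e. tfsec findings.
- task: PublishTestResults@2
  displayName: Publish Test Results
  inputs:
    testResultsFormat: 'JUnit'
    testResultsFiles: '**/TEST-*.xml'
    failTaskOnFailedTests: true
```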

Coming next …

We will create a much leaner environment with a SQL Server. The current lab has many moving parts and takes more than 25 minutes to build. If we are testing only SQL Server and do not require Active Directory, we can streamline the process.